|

|

|

|---|

IUPUI-CSRC Pedestrian Dataset

About

Prediction of pedestrian behavior is critical for fully autonomous vehicles to drive in busy city streets

safely and efficiently. The future autonomous cars need to fit into mixed conditions with not only

technical but also social capabilities. It is important to estimate the temporal-dynamic intent changes

of the pedestrians, provide explanations of the interaction scenes, and support algorithms with social

intelligence.

The IUPUI-CSRC Pedestrian Situated Intent (PSI-1.0) benchmark dataset has two

innovative labels besides comprehensive computer vision annotations. The first novel label is the

dynamic intent changes for the pedestrians to cross in front of the ego-vehicle, achieved from 24

drivers with diverse backgrounds. The second one is the text-based explanations of the driver reasoning

process when estimating pedestrian intents and predicting their behaviors during the interaction period.

PSI-2.0 covers 196 scenes including 110 scenes from PSI-1.0 and contains the labels from 74 new subjects.

Additionally PSI-2.0 includes bounding box annotations for traffic objects and agents which can

be linked with text descriptions and reasoning explanations for building vision-language models.

These innovative labels can enable computer vision tasks like pedestrian intent/behavior prediction,

vehicle-pedestrian interaction segmentation, and video-to-language mapping for explainable algorithms.

The dataset also contains driving dynamics and driving decision-making reasoning explanations.

PSI-2.0

196

Total Number of Scenes

There are 196 unique pedestrian encountering scenes.

79,837

Total Number of Annotated Frames

Number of frames annotated with 682,378 bounding box annotations of traffic objects and Agents. 71,259 unique pose estimations for 391 pedestrians using MS COCO format

987k

Total Number of PSI Estimation

More than 987k estimations are made by 74 human drivers for 373 key pedestrians’ situated intents.

5,773

Total Number of PSI Segmentation Boundaries and Driver Reasoning Explanations

Boundaries identified by human drivers when segmenting the 196 scenes based on the key pedestrians’ situated intents and the corresponding reasoning explanations. (29.45/scene)

PSI-1.0

110

Total Number of Scenes

There are 110 unique pedestrian encountering scenes.

25,881

Total Number of Annotated Frames

Number of frames annotated with object detection and classification, tracking, posture, and semantic segmentation labels.

621k

Total Number of PSI Estimation

More than 621k estimations are made by 24 human drivers for the key pedestrians’ situated intents.

5,473

Total Number of PSI Segmentation Boundaries and Driver Reasoning Explanations

Boundaries identified by human drivers when segmenting the 110 scenes based on the key pedestrians’ situated intents and the corresponding reasoning explanations

Demos

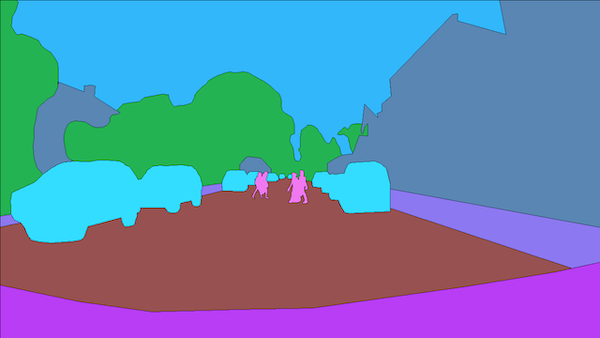

Vision-Based Annotations

Object Detection

Semantic Segmentation

Demo Video